SD-WAN

SD-WAN (software-defined wide area network) connects enterprise networks, including data centers and branch offices, that are separated by large geographic distances. SD-WAN solutions move network control into the cloud, using a software-based approach that simplifies long-distance connectivity and eliminates the need for special proprietary software. These solutions are scalable, easily managed, and provide granular control on a large scale.

Benefits of SD-WAN

Transport Independent Design

Choose the best combination of providers and connectivity for you.

Intelligent Path Control

Automatically route network traffic to the best path for performance.

Application Optimization

Add WAN optimization and caching to help applications run faster and more efficiently.

Secure Connectivity

Block attacks with a highly secure VPN overlay and strong encryption techniques.

One Bill

Centralized billing and one partner to work with for procurement.

Dynamic Multipoint VPN (DMVPN) Provides:

Automatic IP Security (IPsec) triggering for building an IPsec tunnel

- Dynamic discovery of IPsec tunnel endpoints and crypto profiles; eliminates the need to configure static crypto maps defining every pair of IPsec tunnels.

Multipoint GRE (mGRE) tunnel interface

- Allows a single GRE interface to support multiple IPsec tunnels.

Dynamic Routing over VPN

- Enables IP routing tables to be securely distributed between the branch site and the corporate headend over encrypted tunnels. Allows improved reachability without needing to manually define allowed routes.

- Enhanced Interior Gateway Routing Protocol (EIGRP), Open Shortest Path First (OSPF), and Border Gateway Protocol (BGP) routing protocols are supported.

Reduced Configuration Overhead

- DMVPN eliminates the need to configure crypto maps tied to the physical interface, dramatically reducing the number of lines of configuration required for a VPN deployment (e.g., for a 1000-site deployment, DMVPN reduces the configuration effort at the hub from 3900 lines to 13 lines).

- Adding new spokes to the VPN requires no changes at the hub.

- Simplifies configuration of split tunneling. Centralized configuration change at the hub controls the split tunneling behavior. In traditional IPsec, all the spokes need to be modified.

Zero-Touch Deployment

- Cisco DMVPN can be deployed in zero-touch deployment models using Easy Secure Device Deployment for secure PKI-based device provisioning. Devices can be bootstrapped remotely, avoiding the need for extensive staging operations.

Dynamic Spoke-to-Spoke Tunnels

- Direct spoke-to-spoke tunnels eliminate the need for spoke-to-spoke traffic to traverse the hub.

- Reduces latency for voice over IP (VoIP) deployments over DMVPN and improves effective throughput of the hub router.

- Tunnels are created dynamically when required and torn down after use, allowing the system to scale better (i.e., smaller spokes can participate in the virtual full mesh).
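The on-demand tunnel behavior described above can be illustrated with a small Python model. This is a simplified sketch, not Cisco's implementation: the `Hub` and `Spoke` classes, the `idle_timeout` value, and the addresses are all hypothetical, and the hub lookup only stands in for the address resolution that NHRP performs in a real DMVPN.

```python
from dataclasses import dataclass, field


@dataclass
class Hub:
    """Hypothetical stand-in for the hub's table of registered spokes."""
    registrations: dict[str, str] = field(default_factory=dict)  # spoke name -> public IP

    def register(self, spoke: str, public_ip: str) -> None:
        self.registrations[spoke] = public_ip

    def resolve(self, spoke: str) -> str:
        return self.registrations[spoke]


@dataclass
class Spoke:
    name: str
    hub: Hub
    idle_timeout: float = 300.0  # seconds; illustrative value, not a product default
    # peer name -> (peer public IP, time the tunnel was last used)
    tunnels: dict[str, tuple[str, float]] = field(default_factory=dict)

    def send(self, peer: str, now: float) -> str:
        """Return the peer address used for traffic to `peer` at time `now`."""
        self.expire(now)
        if peer in self.tunnels:
            peer_ip = self.tunnels[peer][0]      # reuse the existing direct tunnel
        else:
            peer_ip = self.hub.resolve(peer)     # ask the hub, then go direct
        self.tunnels[peer] = (peer_ip, now)      # create or refresh the tunnel
        return peer_ip

    def expire(self, now: float) -> None:
        """Tear down tunnels that have been idle longer than idle_timeout."""
        self.tunnels = {
            peer: (ip, used)
            for peer, (ip, used) in self.tunnels.items()
            if now - used <= self.idle_timeout
        }
```

Because each spoke only holds state for peers it is actively talking to, a small branch router never needs to maintain tunnels to every other site at once.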

Dynamic Addressing for Spoke Routers

- Spoke routers can use dynamic IP addresses, a frequent requirement for Internet connections over cable and DSL.

Network Address Translation (NAT) Traversal

- DMVPN supports spoke routers running NAT or behind dynamic NAT devices, enabling enhanced security for branch subnets.

IP Multicast Support

- DMVPN supports IP Multicast traffic (between hub and spokes); native IPsec supports only IP Unicast. This provides efficient and scalable distribution of one-to-many and many-to-many traffic.

QoS Support

Cisco DMVPN supports the following advanced QoS mechanisms:

- Traffic shaping at hub interfaces on a per-spoke or per-spoke-group basis.

- Hub-to-spoke and spoke-to-spoke QoS policies.

- Dynamic QoS policies wherein QoS templates are attached automatically to tunnels as they come up.

- Per-spoke QoS policing, which allows spokes to be differentiated and protects the network from being overrun by bandwidth-hungry spokes.
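To make per-spoke policing concrete, the following Python sketch uses a token bucket, a common way to implement a policer. It is an assumption for illustration only; the source does not say which algorithm DMVPN uses, and the class names, rates, and burst sizes are hypothetical.

```python
class TokenBucket:
    """Single-rate policer: packets conform only while tokens remain."""

    def __init__(self, rate_bps: float, burst_bytes: float, now: float = 0.0) -> None:
        self.rate_bytes = rate_bps / 8.0   # refill rate in bytes per second
        self.burst = burst_bytes           # bucket depth in bytes
        self.tokens = burst_bytes          # start with a full bucket
        self.last = now

    def allow(self, size_bytes: int, now: float) -> bool:
        # Refill for the elapsed time, capped at the burst size.
        elapsed = max(0.0, now - self.last)
        self.last = now
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate_bytes)
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False                       # exceeds the policy: drop


class PerSpokePolicer:
    """Keeps one bucket per spoke so a busy spoke cannot starve the others."""

    def __init__(self, rate_bps: float, burst_bytes: float) -> None:
        self.rate_bps = rate_bps
        self.burst_bytes = burst_bytes
        self.buckets: dict[str, TokenBucket] = {}

    def allow(self, spoke: str, size_bytes: int, now: float) -> bool:
        bucket = self.buckets.get(spoke)
        if bucket is None:
            # Bucket is created when the spoke first sends traffic.
            bucket = TokenBucket(self.rate_bps, self.burst_bytes, now)
            self.buckets[spoke] = bucket
        return bucket.allow(size_bytes, now)
```

Creating the bucket the first time a spoke sends traffic loosely mirrors the idea of attaching a QoS template automatically as a tunnel comes up.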

High Availability

- Cisco DMVPN enables routing-based failover.

- Dual WAN links and hub redundancy provide higher availability. DMVPN supports dual-hub designs, where each spoke is peered with two hubs, providing rapid failover.

- Multiple hub topologies allow uninterrupted spoke-to-spoke communication in the event of any single hub failure.

Scalability

- DMVPN scales to thousands of spokes using server load balancing (SLB). Encryption can be integrated within the SLB device or distributed to dedicated headend VPN routers. Tunnels are load balanced over available hubs.

- Performance can be scaled incrementally by adding hubs.

- Hierarchical hub deployments allow enhanced scalability.

Manageability

- Manageability support is provided through IPsec (including VRF-aware IPsec) MIB, NHRP MIB, and command-line interface (CLI).

- Next Hop Resolution Protocol (NHRP) allows spokes to be deployed with dynamically assigned public IP addresses.

VRF Awareness

- VRF-aware DMVPN deployed at the provider edge hubs allows segregation of customer traffic.

Multiprotocol Label Switching (MPLS) Support (2547oDMVPN)

- MPLS networks can be encrypted over DMVPN tunnels.

Performance-Based Routing (PfRv3) – Load Balancing

- PfR lets enterprises fully use WAN investments and avoid oversubscription of lines. The growth of cloud traffic, guest services, and video can easily be load balanced across all WAN paths.

Automatic Performance Optimization

- Reduces engineering operating expenses associated with manual network performance analysis and tuning of the routing infrastructure.

- Ensures that mission-critical applications perform with the speed, availability, and reliability required for business success. Lets business policies guide network traffic at the application level instead of relying on traditional IP prefix-based routing.

Brownout Response

- Automatic detection of network problems and fast routing around poorly performing paths (within 2 seconds) maintains optimal application performance.

Blackout Response

- Active detection of and routing around “black hole” conditions in the network (within 1 second) helps minimize the effects of network outages. Deliver up to 99.999-percent uptime over any transport, such as MPLS, Internet, or hybrid WAN deployments.
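The brownout and blackout responses can be pictured as a policy check on each path's measured health. The Python sketch below is illustrative only and does not reproduce PfRv3's actual logic: the `PathStats` and `Policy` types, the threshold values, and the tie-breaking order are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PathStats:
    name: str
    reachable: bool      # False models a blackout
    delay_ms: float
    loss_pct: float
    jitter_ms: float


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-application thresholds; exceeding one is a brownout."""
    max_delay_ms: float
    max_loss_pct: float
    max_jitter_ms: float


def within_policy(path: PathStats, policy: Policy) -> bool:
    return (
        path.reachable
        and path.delay_ms <= policy.max_delay_ms
        and path.loss_pct <= policy.max_loss_pct
        and path.jitter_ms <= policy.max_jitter_ms
    )


def choose_path(current: str, paths: list[PathStats], policy: Policy) -> Optional[str]:
    """Keep the current path while it is healthy, otherwise move traffic."""
    by_name = {p.name: p for p in paths}
    cur = by_name.get(current)
    if cur is not None and within_policy(cur, policy):
        return current                       # avoid needless path changes

    def rank(p: PathStats) -> tuple[float, float, float]:
        return (p.loss_pct, p.delay_ms, p.jitter_ms)

    healthy = [p for p in paths if within_policy(p, policy)]
    if healthy:
        return min(healthy, key=rank).name   # best path that meets the policy
    reachable = [p for p in paths if p.reachable]
    if reachable:
        return min(reachable, key=rank).name  # least-bad path still up
    return None                               # every path is blacked out
```

For example, a voice policy of 150 ms delay, 1 percent loss, and 30 ms jitter would keep calls on MPLS until its loss rises above 1 percent, then shift them to an Internet path that still meets all three thresholds.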

Granular Site by Site Control

- Scale to branch offices over any transport. Scale to thousands of sites (tens of thousands of traffic classes) without stacking deployments. Maintain granular control from the branch office to the data center and out to the public cloud.

Smart Sensing

- Use smart sensing, which turns off probing when it senses real traffic on the WAN links, also improving scalability.

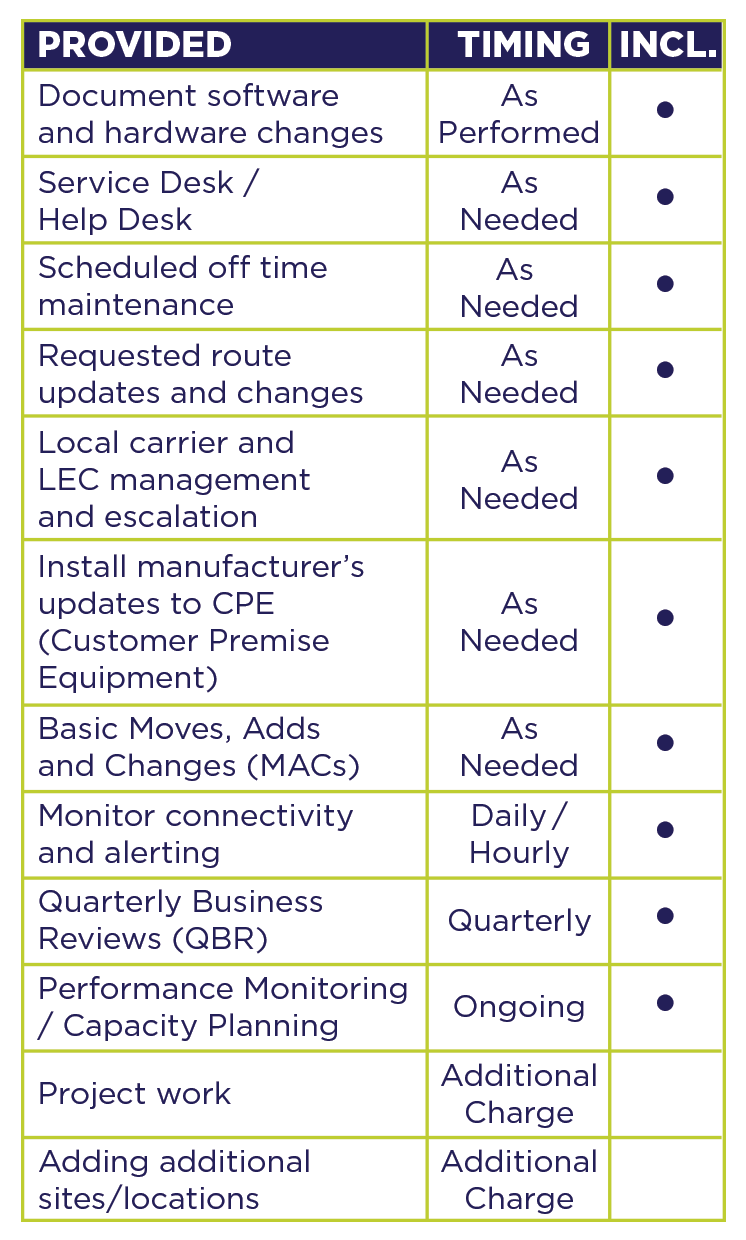

Versa SD-WAN as a Service – SaaS Overview

| PROVIDED | TIMING | INCLUDED |
| --- | --- | --- |
| Document software and hardware changes | As Needed | • |
| Service Desk / Help Desk | As Needed | • |
| Scheduled off time maintenance | As Needed | • |
| Requested route updates and changes | As Needed | • |
| Local carrier and LEC management and escalation | As Needed | • |
| Install manufacturer’s updates to CPE (Customer Premise Equipment) | As Needed | • |
| Basic Moves, Adds and Changes (MACs) | As Needed | • |
| Monitor connectivity and alerting | Daily/Hourly | • |
| Quarterly Business Reviews (QBR) | Quarterly | • |
| Performance Monitoring / Capacity Planning | Ongoing | • |
| Project work | Additional Charge | • |
| Adding additional sites/locations | Additional Charge | • |

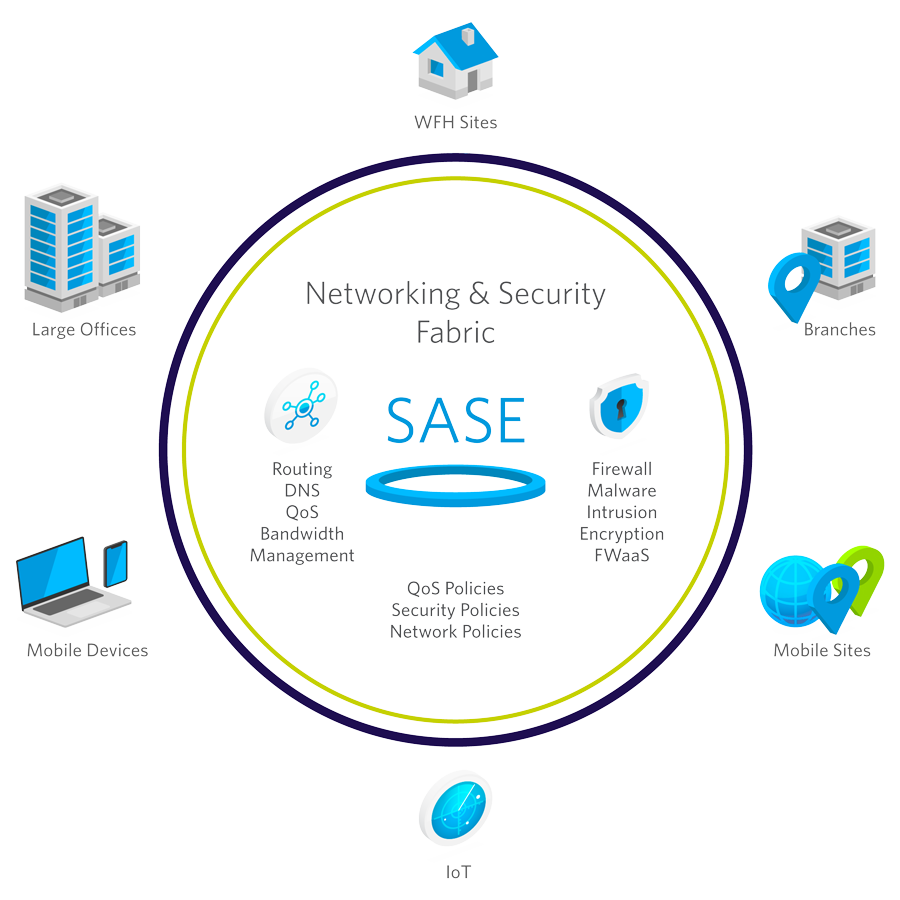

What is SASE (Secure Access Service Edge)?

SASE (Secure Access Service Edge), pronounced “sassy”, is a cloud-native technology that Gartner defined in 2019. SASE establishes network security as an integral, embedded function of the network fabric.

SASE supplants legacy services offered by single-purpose point solutions that are tied to fixed corporate premises such as data centers.

Learn about the business use case and technical background of Secure Access Service Edge (SASE).

Secure Access Service Edge (SASE) for Dummies

Downloadable E-Book

Download our free e-book to learn about the business and technical background of SASE (Secure Access Service Edge), including best practices, real-life customer deployments, and the benefits that come with a SASE-enabled organization.

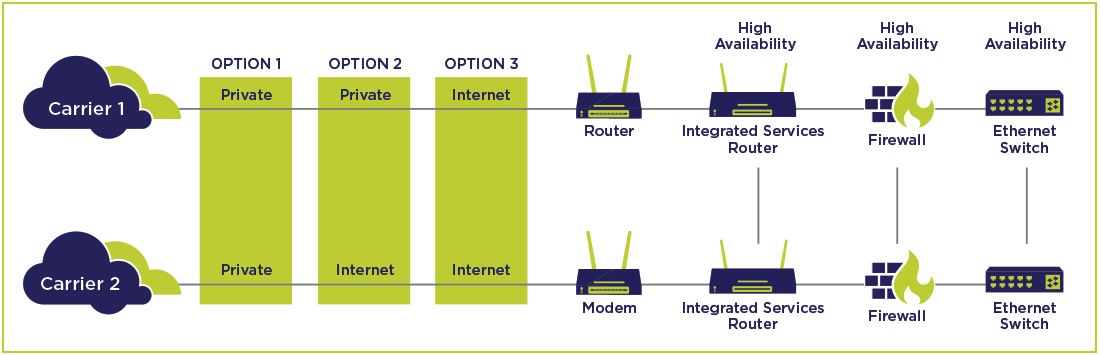

WAN Link Optimization

High availability is enabled by automatically detecting the failure of a WAN link or site and redirecting traffic to working links, providing users with continuous service. WAN link controllers can also be configured in a high-availability mode, with one WAN link controller acting as the primary and a second WAN link controller as a hot standby.

By bundling (aggregating) multiple, diverse Internet links from one or more ISPs, a WAN link controller reduces the need to purchase multiple expensive high-speed links. This enables you to increase bandwidth by using cost-effective links without compromising uptime. In addition to managing scalability and redundancy, you can cost-effectively utilize all available WAN bandwidth through intelligent link load balancing. WAN link controllers provide controls for how bandwidth is used to support applications and connectivity. This allows you to take advantage of the most cost-effective ISP rates while ensuring appropriate levels of bandwidth are available for specific applications.
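Link failover and bandwidth-aware load balancing can be sketched together in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular WAN link controller works: the `WanLink` type, the link names, and the choice of a CRC32 hash to pin each flow to a link are all hypothetical.

```python
import zlib
from dataclasses import dataclass


@dataclass
class WanLink:
    name: str
    bandwidth_mbps: int   # assumed to be a positive whole number
    up: bool = True       # set to False when a link failure is detected


def pick_link(flow_id: str, links: list[WanLink]) -> WanLink:
    """Map a flow to one working link, weighted by link bandwidth."""
    active = [link for link in links if link.up]
    if not active:
        raise RuntimeError("no WAN link available")
    total = sum(link.bandwidth_mbps for link in active)
    # A stable hash keeps every packet of a flow on the same link.
    slot = zlib.crc32(flow_id.encode("utf-8")) % total
    for link in active:
        if slot < link.bandwidth_mbps:
            return link
        slot -= link.bandwidth_mbps
    raise AssertionError("unreachable: slot is always below total")
```

With a 300 Mbps cable link and a 100 Mbps DSL link both up, roughly three quarters of flows would be expected to land on the cable link. If the cable link is marked down, every flow is redirected to DSL on the next call.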

The performance of applications over the WAN directly affects response time. This covers not just the total average transaction time but also whether users located at performance-challenged sites (such as overseas branch offices) receive an acceptable level of performance. Performance is an important criterion for all networking equipment, but it is critical for a device such as a WAN link controller, because datacenters are central points of aggregation. As such, the WAN link controller needs to support high volumes of traffic delivered between sites. A simple definition of performance is how many bits per second the device can support. While this is extremely important, other measures of performance also matter for WAN link controllers, such as how many WAN links can be supported simultaneously.

Network access and secure delivery of applications over the WAN are vital. Network security addresses key elements specific to applications going over the network, such as required levels of encryption, authentication, and maximum reasonable usage. Encrypted traffic tunnels behave differently on the network than clear-text traffic.

Scaling of applications delivered over the WAN is a critical consideration. It is important to understand how many users can use available network resources without having to spend large amounts of money to upgrade the network. Scaling also affects how the network performs when, for example, a new version of software is deployed. Performance requirements for accessing datacenter applications and data resources are usually characterized in terms of both the aggregate throughput of the WAN link controller and the number of simultaneous sessions that can be supported.

WAN link controllers should have an easy-to-use and intuitive web interface for managing themselves and the WAN infrastructure they affect.